The Academy’s Scientific and Technical Awards honor those whose discoveries and innovations have contributed in significant and lasting ways to filmmaking. This year’s ceremony recognized 55 individuals and two companies for their contributions to a wide range of fields and disciplines. Each year particular areas of scientific innovation are acknowledged, and this year facial MoCap was among those recognized. In particular, two teams and their companies were honored: Technoprops, and Standard Deviation with Weta Digital.

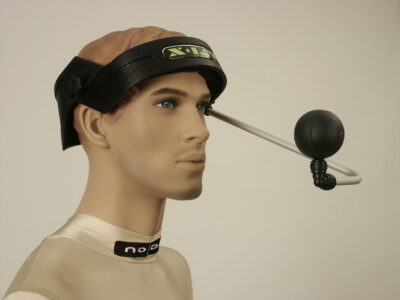

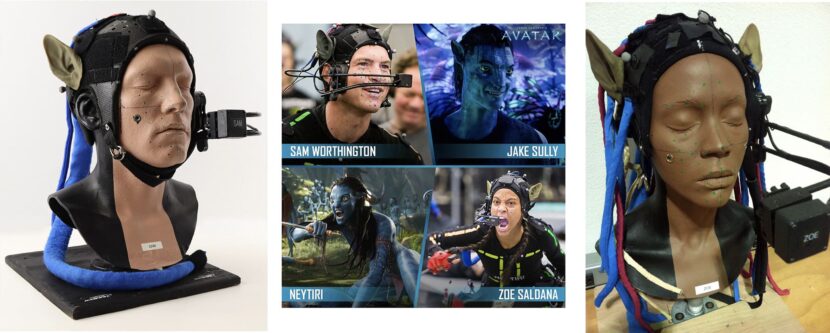

Alejandro Arango, Gary Martinez, Robert Derry and Glenn Derry were honored for the system design, ergonomics, engineering and workflow integration of the widely adopted Technoprops head-mounted camera system. The Technoprops head-mounted camera system, with its modular and production-proven construction, supports consistent face alignment with improved actor comfort, while at the same time permitting quick reconfiguration and minimizing downtime. This system enables repeatable, accurate and unobstructed capture of an actor’s facial movements.

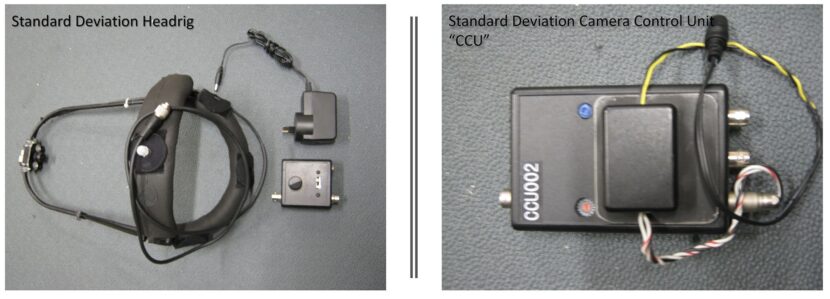

Babak Beheshti and Scott Robitille were also recognized for the development of the compact, stand-alone, phase-accurate genlock synchronization and recording module, and Ian Kelly and Dejan Momcilovic for the technical direction and workflow integration, of the Standard Deviation head-mounted camera system. The Standard Deviation head-mounted camera system provides a robust method of accurate camera synchronization to the house clock. Combined with practical innovations for usability, it enables multiple head-mounted camera systems to be used in large capture volumes, resulting in adoption by numerous motion picture productions.

In their acceptance speech, the Standard Deviation team singled out Joe Letteri and Weta Digital for their ceaseless support, as both teams have had a long association with the senior visual effects supervisor and some of the team still work at Weta Digital. Both teams were connected to the early work in the field, especially around Avatar, and director James Cameron was on hand for the event (filmed remotely).

James Cameron is currently making the next set of Avatar sequels in New Zealand with Weta Digital. These films are again deploying head-mounted facial camera MoCap rigs. Cameron is currently directing pick-up shots of the Na’vi using the newest versions of the original head cam rigs. In November 2018, Cameron announced online that filming on Avatar 2 and 3 with the principal performance capture cast had been completed.

Technoprops

The company became part of Fox Studios. Under the terms of that acquisition, Technoprops operated as The Fox VFX Lab, and the division opened a virtual production facility in downtown Los Angeles, where Derry’s team was headquartered. This was before the Disney-Fox merger; today Technoprops continues inside Disney but no longer runs the LA stage. Glenn Derry now works apart from the company as a senior virtual production consultant, but remains very close friends with his former teammates. Derry himself is in very strong demand as virtual production has taken off and companies around the world look to the ground-breaking work that both Technoprops and Fox VFX Lab pioneered. We spoke to Glenn, from his office in LA, about the history of the head-mounted facial MoCap rigs.

Apart from controlling ‘Teddy’ in Steven Spielberg’s A.I. Artificial Intelligence, supervising virtual production on Avatar, developing and supervising simulcam on Disney’s The Jungle Book, and supervising facial capture at ILM on films like Warcraft, Glenn Derry is also rumored to have once personally installed Tom Cruise’s advanced TV and taught him how to use the remote!

Technoprops is known for more than its high-quality facial capture systems. The company has been a leader in all forms of virtual production pipeline creation and on-set supervision. They are known for their bespoke virtual camera hardware and software, as well as augmented reality cinematography systems and on-set operation (aka SIMULCAM), DIT, video assist, and dailies pipelines. The team has developed motion base operations and integrated them with CG pipelines. Today the team are leading experts in building and integrating high-end LED capture volumes. In the process, they have helped creative teams use technology to make scripts a reality.

Technoprops’ movie and games credits include Avatar, Warcraft, Avengers: Age of Ultron, Disney’s The Jungle Book, and many more. This year’s award focuses on their pioneering work with facial MoCap head rigs.

Pre-MoCap

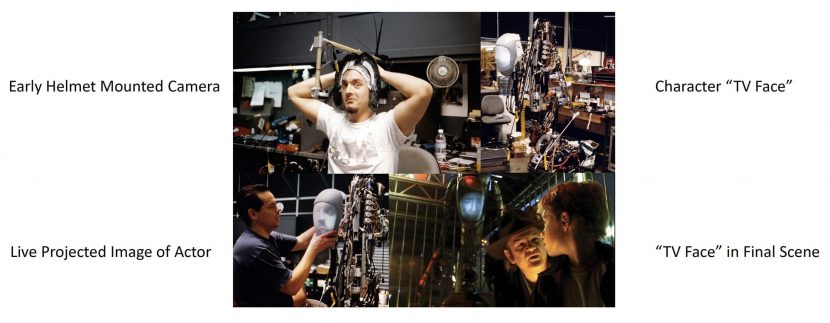

Before there was facial MoCap, there had been limited use of cameras aimed at heads on films such as Steven Spielberg’s A.I. Artificial Intelligence.

Avatar

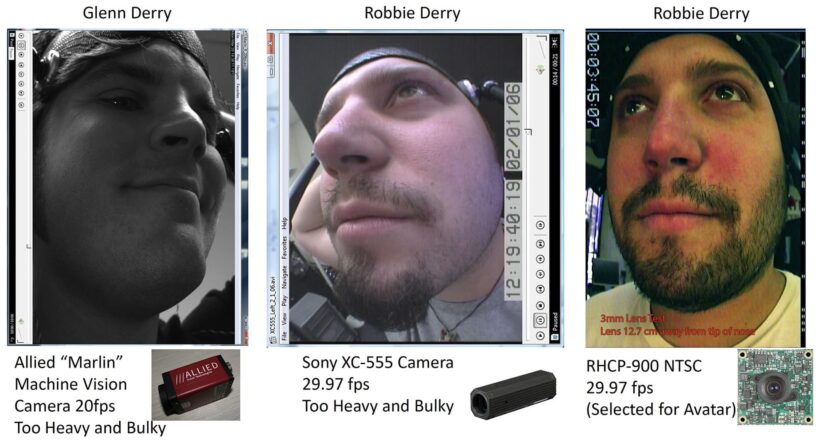

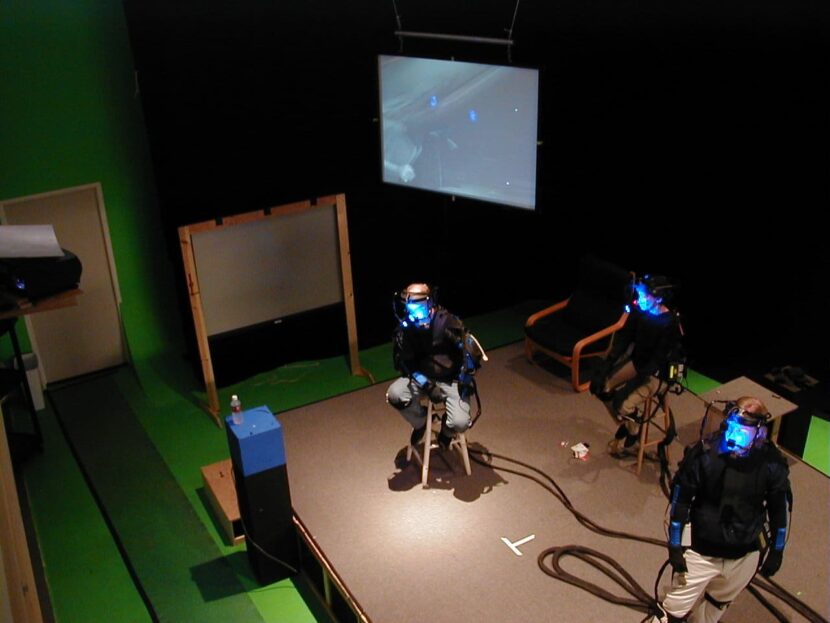

The A.I. work was done in 2001, but it was in 2005 that the modern capture helmet first appeared, in the early tests for Avatar. In the image below, the first test shots of Robbie and Glenn Derry can be seen with a variety of cameras.

The first prototype rig was based on a soccer helmet and had a custom boom arm design, but it was too unsteady and covered too much of the face. These early tests also involved Standard Deviation, which provided the first camera control units.

2005: The first major motion picture to use head-mounted facial capture technology on a large scale was Avatar, and it involved several companies.

- STAN WINSTON STUDIOS provided custom fiberglass helmets and vacuform masks using traditional “Lifecast” methods.

- STANDARD DEVIATION (Babak Beheshti) provided the OEM cameras and the Camera Control Unit (CCU), with LTC sync and burn-in.

- PLAYBACK TECHNOLOGIES provided the Raptor 25 OEM recorders, which were then modified by Technoprops to accept CF card media and remote REC triggering.

- TECHNOPROPS provided the overall mechanical design, the integration of the various third-party equipment, and the camera boom design and cabling. Technoprops was also in charge of the on-set operation of the head rigs.

Those early rigs were fiberglass helmets made by Stan Winston, with a lightweight aluminum tubing boom holding a mini standard-definition camera and integrated white LED lights. As the rigs went into production for Avatar, the design evolved to have ‘break away’ arms: if the actor or stunt performer hit or hooked the boom on something, the boom arm would break away. This functionality saved both the equipment and the performer on countless occasions.

Along with Glenn Derry, for Avatar, Robbie Derry was the mechanical designer who designed all of the original skullcaps. Gary Martinez was also a mechanical designer; he designed the enclosures and the camera arms that made the camera adjustable. Alejandro Arango was the electronics engineer. The team worked closely on all of the issues and found ways to make the system workable for James Cameron. The Avatar rig was built based on testing done by the team in early pre-production. This initial test was actually not with Weta Digital. “We did the first test with ILM, and I believe we used some Image Metrics hardware,” commented Glenn Derry. “But it was not super robust at the time. It was just kind of stuff that you would use as an artist at your workstation. We were just trying to hardwire some cameras and so this was just literally lipstick cameras hardwired to recording decks.”

The original “Template shoot”, as it was codenamed, was supervised by senior VFX supervisor Rob Legato. The test consisted of six shots in which Zoë Saldaña as Neytiri is talking to Sam Worthington as Jake Sully at a branch in the forest of Pandora. ILM took the test footage and finished it through their new character pipeline to final shots, to test the virtual cinematography with the facial capture. Cameron’s reaction to the test was “that’s the idea, now go make something that we can actually use day in, day out,” Derry laughingly recalls.

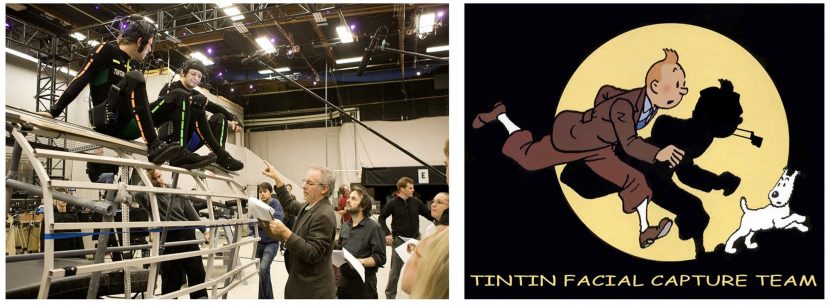

“Glenn (Derry) built the Avatar head-mounted camera rig that became the reference for future facial camera workflows. Glenn’s gear was the first to deliver the wireless video signal for our FACETS facial tracking system,” commented four-time Academy Award winner Joe Letteri, co-founder of Weta Digital in Wellington. The FACETS system at Weta captures nuanced details of an actor’s facial performance and figures out how the muscles in the face would have moved. It then maps them onto virtual characters, even if the character has very non-human proportions. It was developed to capture more than a dozen actors in a scene and to allow for body capture to happen at the same time. This means that directors are able to direct the action in much the same way as they would in a live production. The FACETS system has been used on major facial capture projects at Weta Digital including Avatar, The Adventures of Tintin, The Hobbit trilogy, and the Planet of the Apes trilogy.

Standard Deviation helped the Technoprops team to turn the original helmet test rigs into working production models. Standard Deviation was also instrumental in building Weta’s face pipeline and continues to this day to be a highly valued technical partner at Weta Digital. While Standard Deviation is only four engineers, the company has been successfully solving MoCap engineering problems for nearly thirty years.

Standard Deviation

Standard Deviation grew out of Chris Walker’s company Modern Cartoons, which pioneered facial animation with individual performer cameras. The team was inspired by the original innovations of Olaf Schirm, a German inventor and artist. Schirm’s tiny infrared head-mounted camera (HMC) rig digitized and mimicked Schirm’s facial expression in a system called the X-IST tracker. This was tested by Sony Pictures and ZDF TV in Germany and used by Manhattan Transfer in the USA to develop the PBS TV show Backyard Safari in 1995. Schirm was acknowledged for his ground-breaking work by Standard Deviation’s co-founder Babak Beheshti in his acceptance speech. It was Schirm’s work that led to the first use of what we think of as a head-camera capture system at Modern Cartoons in LA. When that company closed in 2001, Beheshti and fellow co-founder Scott Robitille established Standard Deviation.

It was Joe Letteri himself who found the small team in Santa Monica and got them involved with Weta’s pitch tests for Avatar. Thus Standard Deviation helped both teams pitch technical solutions to Cameron.

Avatar & Tintin

While the film Tintin came out in 2011, two years after Avatar, the testing for that film was happening before the principal photography on Avatar. Beheshti worked closely with Dejan Momcilovic on a critical pitch in the USA with Weta that presented their solution to Steven Spielberg, James Cameron, and Peter Jackson simultaneously. This historic presentation involved a demonstration with Avatar actress Zoë Saldaña. Momcilovic recalls proudly fitting the headgear to the actress and thinking she was happy and comfortable, only to walk on stage and have Cameron ask her immediately what it was like, and have the actress casually comment that it was ‘unbearable’. “I was really careful with Zoë, … and I was standing right there beside her, and Cameron turned to me and said – ‘what the hell guys?’ That led to much better designs and all sorts of improvements,” Momcilovic laughingly recalls. Naturally, the fit and performance of the rigs were improved and Weta was the lead VFX house on the film. Those improvements continue to this day, and Momcilovic and Beheshti remain close collaborators and friends.

Dejan Momcilovic is currently a motion capture supervisor at Weta Digital. Since joining Weta Digital in 2005 as Motion Capture Supervisor for King Kong, he has managed the motion capture teams across 15 films. He has also provided a guiding hand in the R&D for the underlying hardware and software technology, managing Weta’s own in-house work as well as the collaboration with its outside partners. Momcilovic was instrumental in developing Weta’s FACETS system, which itself was honored with a Sci-Tech award in 2017. FACETS was developed primarily to serve the needs of Avatar, and it represented a major rewrite of the approach used for the previous film at Weta, King Kong. The FACETS system is aimed squarely at facial performance, not generalized motion capture. Avatar was the first time Weta used a head-mounted camera rig for facial motion capture. Previously, for Weta’s facial work on King Kong, actor Andy Serkis had been filmed with a camera not attached to his body. Furthermore, Serkis was not acting in the scene (in part due to the scale issues), so his performance was captured “more on par with an ADR process”, commented Weta’s Luca Fascione.

Avatar went through effectively two rounds of helmet development. “By the time we were at actual shooting, we had added things like the ability to adjust the parameters on the cameras remotely using software and things like that,” recalls Derry. The production shot in New Zealand and then returned to the USA and continued shooting in Playa Vista, California at the Hughes Center, which now houses Google’s YouTube.

As Avatar was made before widespread 3D printing, the team experimented with taking traditional alginate face casts of the whole cast and then making fiberglass helmets from them, personalized to each actor.

In the early days of the helmets, two versions were made for each actor, one with a right arm and one with a left. This was to allow the main camera to film a clean, unobstructed view of the actor, whichever way they faced. “Whatever side of the line the camera was going to be on, we would put the actors in the right helmets that corresponded to our ability to get clean reference camera footage – from the correct angle,” commented Derry. “That’s a very Jim (Cameron) thing, by the way. That’s such a Jim-ism ... and now we were going to have two rigs for everyone.”

Since Avatar, various versions of the head-mounted camera have been used on a wide variety of productions. The first 3D-printed helmets were used on The Adventures of Tintin in 2011.

Tintin marks somewhat of a divergence between the two teams, but only in terms of projects and proprietary solutions. The core of the Technoprops team would, via the various mergers and acquisitions, end up at ILM inside Disney, while Standard Deviation has continued to innovate with Weta Digital. Not only do they make specialist head-mounted camera gear, but two of the largest capture volumes/sound stages for Avatar 2 & 3 use Standard Deviation’s bespoke motion capture cameras, as do many games companies around the world.

The Sci-Tech award for Standard Deviation singles out their ‘robust method of accurate camera synchronization to the house clock’. This refers to the ongoing contribution that Standard Deviation has made to allowing actors to be captured precisely. It was key in the early days to keep all of the cameras in tight sync, and this then needed to be done remotely. While some companies solve this with a Wi-Fi signal, Standard Deviation built a system that locks to house sync and then just remains rock steady, drifting less than 300 milliseconds over a 24-hour period. So impressive was this sync lock that Weta relied on it for the external volume capture sync when doing exterior motion capture for the first Planet of the Apes and re-incorporated it into the portable facial capture for The Hobbit. For example, the accuracy of the electronics allows the head-mounted cameras to shoot a frame with a shutter speed of one ten-thousandth of a second (to eliminate motion blur), synchronized to fire a fraction of a second before the camera tracking system pulses.
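To put the quoted drift and shutter figures in more familiar units, here is a minimal back-of-the-envelope sketch. It is not Standard Deviation's method, only arithmetic on the numbers in the text. It assumes the 300 millisecond figure is an upper bound that accumulates linearly over the day, and it assumes a 24 fps project rate, which the article does not state. All function names are hypothetical.

```python
# Back-of-the-envelope arithmetic on the figures quoted in the article.
# Assumptions (not from the source): drift accumulates linearly, and the
# project runs at 24 frames per second.

SECONDS_PER_DAY = 24 * 60 * 60


def drift_ppm(drift_seconds: float, period_seconds: float) -> float:
    """Express a clock drift over a period as parts per million."""
    return drift_seconds / period_seconds * 1_000_000


def drift_in_frames(drift_seconds: float, fps: float) -> float:
    """Convert a time offset into a number of frames at a given frame rate."""
    return drift_seconds * fps


def seconds_until_offset(target_seconds: float, drift_seconds: float,
                         period_seconds: float) -> float:
    """Time needed for a linearly drifting clock to accumulate a given offset."""
    drift_rate = drift_seconds / period_seconds  # seconds of error per second
    return target_seconds / drift_rate


def exposure_microseconds(shutter_denominator: float) -> float:
    """Exposure time in microseconds for a 1/N second shutter."""
    return 1_000_000 / shutter_denominator


if __name__ == "__main__":
    fps = 24.0        # assumed frame rate, hypothetical
    max_drift = 0.3   # 300 milliseconds, as quoted
    print(f"drift rate: {drift_ppm(max_drift, SECONDS_PER_DAY):.2f} ppm")
    print(f"drift per day: {drift_in_frames(max_drift, fps):.1f} frames")
    hours = seconds_until_offset(1 / fps, max_drift, SECONDS_PER_DAY) / 3600
    print(f"time to drift one frame: {hours:.2f} hours")
    print(f"exposure: {exposure_microseconds(10_000):.0f} microseconds")
```

Under those assumptions the quoted bound works out to roughly 3.5 parts per million, and the 1/10,000 second shutter is a 100 microsecond exposure.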

The other recipient of the award was Ian Kelly, who was part of the production team and on set for Avatar. Kelly also worked with Standard Deviation on Alice in Wonderland some years later, and he is credited with informing much of the team’s approach to what was needed. “He was a part of the Avatar production team and he was the one who taught us the importance of good time code and sync hygiene,” comments Beheshti fondly.

Research into face pipelines was not just driven by feature film work. At Weta Digital, Dejan Momcilovic points out that in 2007 the computer game Heavenly Sword was key in the research done by his team. For King Kong, Mark Sagar had introduced a FACS pipeline to Weta, but right after that project, Andy Serkis brought in the video game project Heavenly Sword, which needed 10 to 15 characters facially solved. To cope with this increased volume, Momcilovic had to find new ways to reliably solve large volumes of facial data. This is how Momcilovic met Luca Fascione, who would go on to be the senior Head of Technology & Research at Weta Digital, where he oversees Weta’s core R&D efforts including Simulation and Rendering Research, Software Engineering and Production Engineering.

Around the world

There have been multiple versions and design iterations of the helmets using 3D printing and other modern manufacturing techniques, which have addressed both the fit and weight of modern head-mounted camera rigs. The camera systems also moved from standard-def video to HD, and now 2K, all using much more elaborate software to interpret the data. The industry also moved to using a pair of stereo cameras to better judge the jaw movement in three dimensions. The first such rigs were side-by-side camera rigs on films such as John Carter of Mars. More recently they have been positioned vertically in a tight stereo pair.

ILM has done extensive work in refining their pipeline with films such as Teenage Mutant Ninja Turtles, Warcraft, and Avengers: Age of Ultron. The dual camera allowed Guy Henry to become Tarkin in Rogue One, and Josh Brolin to become Thanos and Mark Ruffalo to be Hulk in Avengers: Endgame, all wearing Technoprops rigs.

Today

Standard Deviation’s newest rigs leave a large area of the headspace free to keep the actor from overheating, especially when they are likely to already be in a capture suit. They are also very lightweight, among the lightest HMCs available.

Weta’s Dejan Momcilovic still works closely with Standard Deviation at Weta Digital, which is hard at work not only on Avatar 2 & 3 but also the planned Avatar 4 & 5.

Technoprops continues to service the Lucasfilm technical community, both with HMC MoCap units and as part of the StageCraft virtual production community. And with the advent of things such as Epic Games’ MetaHuman, there appears to be even more focus on digital humans and high-fidelity capture.